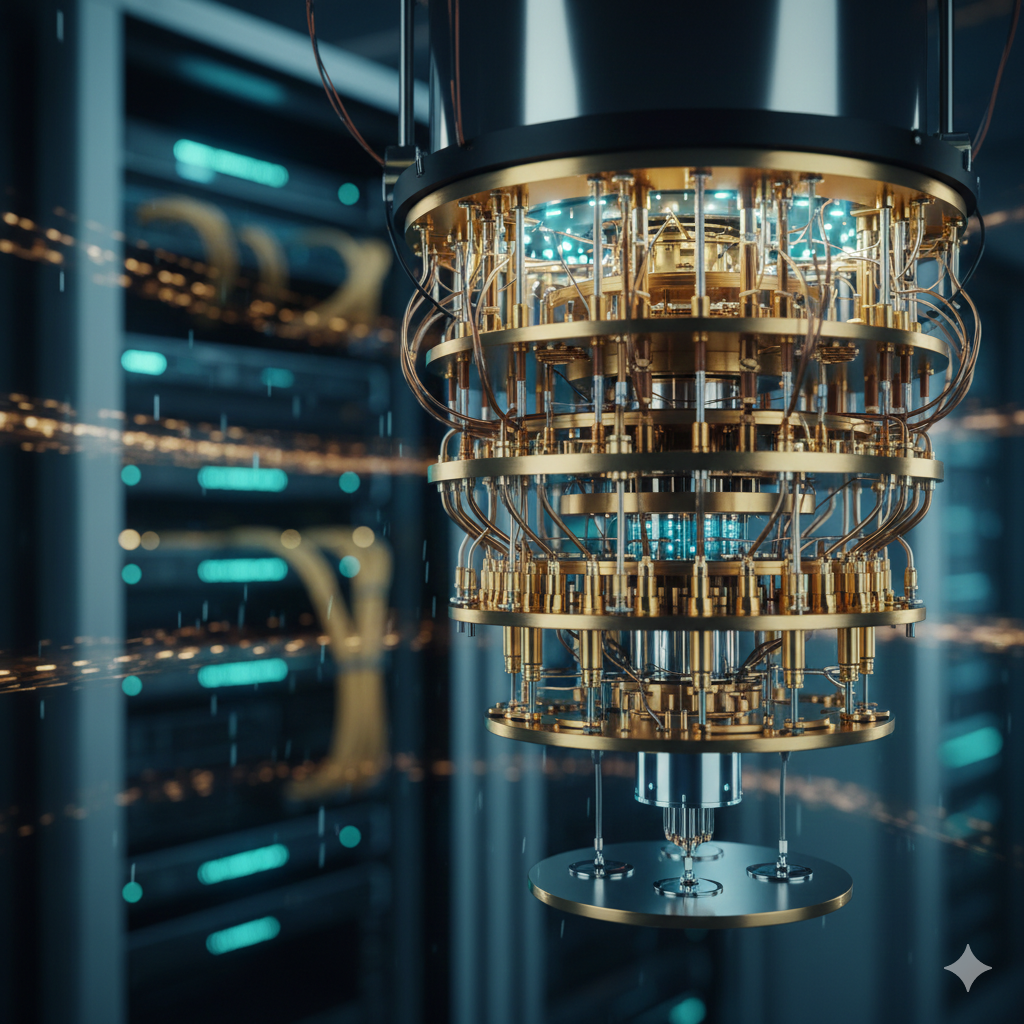

The barrier to entry for quantum computing has long been defined by extreme physical requirements. Dilution refrigerators, temperatures near absolute zero, and highly specialized environments confined meaningful access to a handful of nation-states and trillion-dollar corporations. That model is now beginning to fracture. The emergence of Quantum-as-a-Service (QaaS) is shifting quantum power from exclusive infrastructure to an accessible cloud-based capability.

This change represents more than operational convenience. It is a strategic unlock for mid-market enterprises that previously sat outside the quantum conversation. By offering remote access to quantum processing units through standard cloud subscriptions, providers are enabling companies in logistics, pharmaceuticals, and finance to experiment with optimization and simulation problems that were previously out of reach. Ownership of hardware is no longer the advantage. Mastery of quantum algorithms is.

One of the clearest signals emerging from early deployments is the rise of hybrid workflows. Most adopters are not attempting to replace classical systems. Instead, they are embedding quantum computation into specific, high-complexity segments of existing processes. In materials science, for example, classical systems manage broad simulations, while quantum processors are used selectively to model molecular interactions at the level of electronic structure. This targeted use allows organizations to capture quantum benefits without committing to fully quantum-native architectures.
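A hybrid workflow of this kind can be illustrated with a toy variational loop. The sketch below is an assumption-laden illustration, not any provider's API: `quantum_expectation` is a hypothetical stand-in for a remote QPU call, simulated locally with NumPy for a single qubit, while the classical side runs plain gradient descent using the parameter-shift rule.

```python
import numpy as np

# Pauli-Z observable; its expectation value plays the role of an "energy".
PAULI_Z = np.array([[1.0, 0.0], [0.0, -1.0]])


def quantum_expectation(theta: float) -> float:
    """Hypothetical stand-in for a QPU call, simulated classically.

    Prepares RY(theta)|0> = [cos(theta/2), sin(theta/2)] and returns <Z>.
    In a real deployment this would be a request to a cloud quantum backend.
    """
    state = np.array([np.cos(theta / 2), np.sin(theta / 2)])
    return float(state @ PAULI_Z @ state)


def parameter_shift_gradient(theta: float) -> float:
    """Gradient of <Z> with respect to theta from two shifted evaluations."""
    plus = quantum_expectation(theta + np.pi / 2)
    minus = quantum_expectation(theta - np.pi / 2)
    return 0.5 * (plus - minus)


def minimize_energy(theta0: float = 0.4, lr: float = 0.4, steps: int = 100):
    """Classical outer loop: gradient descent on the quantum-evaluated cost."""
    theta = theta0
    for _ in range(steps):
        theta -= lr * parameter_shift_gradient(theta)
    return theta, quantum_expectation(theta)


theta_opt, energy = minimize_energy()
print(f"theta = {theta_opt:.4f}, energy = {energy:.6f}")
```

The division of labor is the point: the classical optimizer owns the loop and the bookkeeping, and only the expectation-value evaluation would be sent to quantum hardware.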

This hybrid approach reflects the practical reality of today's noisy, limited-scale quantum hardware. It lowers risk, reduces cost, and accelerates learning. At the same time, the growing standardization of quantum software development kits is lowering the technical barrier for classical developers. Engineers can now explore superposition-based computation without deep backgrounds in quantum physics, accelerating experimentation across a wider talent base.

The democratization of quantum capability, however, introduces a parallel layer of strategic risk. As access expands, the timeline for quantum-enabled cryptographic disruption becomes harder to predict. The point at which quantum systems can reliably compromise existing encryption standards is no longer a distant theoretical milestone. Organizations are being forced to confront post-quantum cryptography earlier than anticipated, balancing innovation gains against long-term security exposure.

Capital allocation is already adjusting to this reality. Investment is shifting away from hardware-centric quantum startups toward software platforms that manage orchestration between classical and quantum environments. The emerging value layer is middleware. These systems translate quantum output into usable business intelligence and integrate it into existing workflows, making quantum power practical for non-specialist teams.
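To make the middleware idea concrete, here is a minimal sketch of the translation step, assuming a quantum job returns measurement counts keyed by bitstring. The function name, the warehouse labels, and the sample counts are all hypothetical, and the bit ordering (leftmost bit maps to the first label) is an assumption that differs between SDKs.

```python
from typing import Dict, List


def summarize_counts(counts: Dict[str, int], labels: List[str]) -> dict:
    """Turn raw measurement counts into a small, business-readable report.

    Assumes each bit marks whether the matching label is selected, with the
    leftmost bit corresponding to labels[0] (an assumption; SDKs vary).
    """
    total_shots = sum(counts.values())
    # Pick the most frequently observed bitstring as the candidate answer.
    best_bits, best_hits = max(counts.items(), key=lambda item: item[1])
    selected = [label for bit, label in zip(best_bits, labels) if bit == "1"]
    return {
        "selected": selected,
        "confidence": best_hits / total_shots,  # share of shots agreeing
        "shots": total_shots,
    }


# Hypothetical output of an optimization job over three candidate sites.
sample_counts = {"101": 612, "011": 250, "110": 138}
site_labels = ["warehouse_a", "warehouse_b", "warehouse_c"]

report = summarize_counts(sample_counts, site_labels)
print(report)
```

A production layer would also handle job submission, error mitigation, and validation against a classical baseline; the sketch covers only the final decoding step that non-specialist teams would consume.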

Looking ahead, cloud infrastructure is likely to split into two distinct tiers. One will remain general-purpose, optimized for everyday enterprise operations. The other will evolve into a high-performance quantum tier, designed for research, optimization, and simulation-intensive workloads. The organizations best positioned for the next decade will not be those chasing quantum hardware headlines, but those building internal capabilities to identify which problems are suitable for quantum computation. The decisive factor is no longer access to quantum machines. It is the readiness of data, processes, and talent to operate in a fundamentally different computational paradigm.